TAS 194: Meridian Audio’s Bob Stuart Talks with Robert Harley

- NEWS

- by Robert Harley

- Aug 28, 2009

Note: The following interview was originally published in The Absolute Sound issue 194 in conjunction with Editor Robert Harley’s review of the Meridian 808.2 Reference Signature CD player.

__________

J. Robert Stuart is a singular individual in high-end audio as well as in audio science. He brings to high-end product design the insight gained from a formal education in psychoacoustics along with decades of original research in that field. Concomitantly, his scientific work is informed by a high-end aesthetic that embraces the individual listening experience.

The titles of a few of his Audio Engineering Society papers reflect Stuart’s decades-long quest to correlate what we can measure with what we can hear: “Predicting the Audibility, Detectability, and Loudness of Errors in Audio Systems,” “Estimating the Significance of Errors in Audio Systems,” and “Implementation and Measurement with Respect to Human Auditory Capabilities” are just a few of his many published works. He was made a Fellow of the Audio Engineering Society for the first two of those groundbreaking papers. No other person I’m aware of can move so comfortably between the often-conflicting worlds of the audiophile and the academic.

The result is the powerful fusion of the theoretical and the experiential exemplified by the products of Meridian Audio, the company Stuart co-founded in 1977. Among Meridian’s innovations are the first “audiophile” CD player in 1983 (a modified Philips machine), the first digital surround-sound processor, the development of the lossless coding algorithm adopted for DVD-Audio (now the basis of Dolby TrueHD), and the first active loudspeaker to use digital signal processing (DSP). Stuart has been at the forefront of improving CD sound, as well as advancing the cause of high-resolution digital audio.

Bob spoke to me by phone from Meridian’s factory in Cambridge, England, about his approach to audio design, the new 808.2 CD player, and the evolution of CD sound quality.

Robert Harley: You are the only high-end designer I know of who has a formal education in psychoacoustics, and who uses that field as a basis for product design. How has your work in psychoacoustics influenced Meridian products?

Bob Stuart: Oh, completely. Almost every design decision we make in relation to the sound is informed by knowledge about how we hear. Because it’s terribly important to know not only the value of each change you make but the way each component of the error the system makes is going to be interpreted.

What we’re trying to do with any system is not just to minimize the errors that it makes, but to understand how each error operates in the context of the others. You absolutely have to understand psychoacoustics if you’re going to come up with the value of the differences.

We’ve done lots of psychoacoustic modeling, studying it in order to determine where the most important areas are. We’re working with all sorts of things ranging from thresholds, to loudness, to how one thing sounds in the presence of another. We work with timing, distortion—how much you can get away with, how much you can’t get away with—and whether you’re creating an error that is spatially disconnected from the thing that caused it in the first place. All these are very important. So yes, I approach audio design fundamentally from the way we hear.

Robert: It seems like that would be a logical foundation for anyone designing audio equipment, but no one else seems to take that approach.

Bob: Yes. It’s odd, isn’t it?

Robert: Usually it’s an electrical engineering approach.

Bob: My brain comes at it from different directions. One is a deep love of music. Another is that I’ve got trained hearing; it started out being acute and then over the years I’ve learned to recognize certain kinds of things, particularly identifying the cause of a defect.

The errors that occur in an audio system have completely different dimensions. The kind of distortions a loudspeaker makes are completely different sonically from the ones that an amplifier makes, generally speaking. It’s really important to understand how a human being responds to sounds. We don’t hear sinewaves and noises and clicks and ticks, which are the vectors that electronics and acoustic engineers use to measure systems. When we hear a waveform there’s a very complex cognitive process that follows—we immediately externalize that sound as an object. If you design on an electrical engineering basis you’d say that an amplifier only has to be flat from 20Hz to 20kHz and with distortion below “x.” You’re immediately starting out with a model that says I believe I understand completely how this all works, and I’m not giving any value to the subjective mapping or the interpretive mapping or the cognitive mapping of what’s going on. So you can measure something objectively, but you know as well as I do that it’s possible to design a system that measures well but is not satisfactory. That’s why we inform everything we do not only with psychoacoustics, but with critical listening. You have to listen to everything.

What I think is outrageous is to say we understand everything about how the human hearing system works, because what we do know is that it’s incredibly sensitive to certain kinds of differences and very tolerant of others. That’s why you can get away with pretty horrific codecs for telephones, and why MP3 doesn’t completely destroy the intelligibility of the content.

Robert: The AES [Audio Engineering Society] tends to reject the individual listening experience, and the high end often relies on less-than-rigorous science in product design. You seem to move quite comfortably between these often opposing worlds.

Bob: Well, first of all, I don’t believe in black magic. Everything we do can be engineered to the best of our understanding.

Robert: If the sound is different then the signal is different.

Bob: Yes, the signal’s different, or the context of the signal is different in some way. Maybe there’s something going on which is changing the way we interpret other aspects of the signal. It could be spatial; it could be time-related. One of the reasons this is fascinating is that the very smallest details in the context of the music can make a step change in what we perceive.

Robert: There’s not a linear relationship between the objective magnitude of a change and the musical significance of that change.

Bob: Absolutely not, and you’ll have heard this many times. As you improve a system it’ll sound the same, and then suddenly you’ll realize that you heard something that you’ve never heard before. In the early days of CD we were working on a system and were listening to a very familiar recording that had a guitar in the back. One day we realized that we weren’t listening to a guitar, but to two guitars. It’s that kind of step change that is fascinating. Human hearing is non-linear on lots of levels, and because we have memory, we can never perform the same test twice. If a better system lets you hear an instrument you hadn’t noticed before, for example, you can go back to a lower-quality system and will always hear that instrument.

Robert: That brings to mind a conversation we had at CES about why blind listening tests may not be reliable. You said that when exposed to sound, our brain builds a model over time of what’s creating that sound. The rapid switching in blind testing doesn’t allow that natural process to occur, and we get confused.

Bob: That’s right. Perception happens on lots of different time scales. There’s something called the conscious present, which is a period of time over which some of this integration into an object would happen. If you were dropped into a concert hall, how long would it take you to really understand what it is you’re hearing? It can take several seconds, or even minutes, before you’re listening fully into the space.

Sometimes when you’re looking for a difference between A and B, you can hear it quickly. Other times the difference between A and B can come on a time scale of minutes or even longer where you find that you’ve changed something and you don’t notice a change but find that you have a very different connection to the music. But if you are doing quick switching that mechanism gets broken.

The problem with A/B switching, or blind listening tests, is that it doesn’t always eliminate things that we find to be important on a lot of time scales. Obviously you can do blind listening on long time scales, and that’s good. I don’t tend to do a lot of that, because typically what we’re trying to do is work out whether something we’re doing has made a difference rather than to prove that you can hear it.

Listening is so multi-dimensional. It’s always struck me as quite interesting that I can take a system where the speaker has certain, even gross, defects and maybe an amplifier has others, but we can change something very subtle in the digital signal processing that’s feeding that chain and we hear it very clearly because this difference is on a totally different dimension than all the other defects. It’s separated and independent, whether it’s spatially or whatever it is. We go into listening tests to decide when we stop hearing a distortion rather than just arbitrarily playing one thing and another thing with no knowledge of what’s going on. What we’re looking for is not only that we can hear a difference but also that it is more musically satisfying. Did it take me closer to the artist? Does it inform me more of what the composer intended? Am I able to tell better what the instruments are? You can’t always do that if you’re not somehow in control of the parameters. Do you agree?

Robert: Absolutely. There’s the related problem of trying to focus on specific aspects of the presentation to identify one over the other and missing the musical qualities you just described. It’s those qualities that are the very reason we listen to music in the first place, and those qualities that distinguish very good from mediocre products.

Bob: Exactly. Sometimes it simply doesn’t give you the context in which to make the judgment. And memory plays a part, as we discussed. If I’m listening to two presentations of a piece of music and in one of them I suddenly learn something about the performance, then it’s going to inform the next one when I go back. So it tends to be something that you can’t do too many times. If you had the memory of a goldfish, maybe it would work.

You can make a system which is bad enough that you can’t hear the difference between these things, and you can create a set of circumstances where you can’t tell the differences. It’s been proven elsewhere that if you put people in a stressful situation maybe they can’t tell the difference between quite surprising things.

Robert: Let’s talk about the evolution of CD playback. When you modified that Philips player in 1983, did you ever in your wildest imagination think that CD could deliver the level of sound quality that the 808.2 achieves?

Bob: No. We were fiddling about with digital before CD came out and we knew that it had a great potential to be better than analog in certain areas, particularly the area of pitch stability. CD had this attribute but had lots of other problems, and I don’t think any of us thought then that 16-bit was enough. It clearly wasn’t, from a basic psychoacoustic point of view, and 44kHz was certainly a bit tight. But when CD first came out it was kind of a miracle that it worked at all. You looked at the thing and asked, “How can this work?” Are we glad it wasn’t 14 bit? Absolutely. Would we have had a different trajectory if the sample rate had been 48k? Yes, probably.

What I most remember is thinking that we should get involved in this. We looked at the CD, looked at the plans, and took the lid off a player and thought, “Wow! This has been designed by computer engineers, not audio engineers.” We could clearly see what they had done wrong, and we saw an opportunity.

Robert: How much better can 44kHz, 16-bit get? Are we running out of all the performance improvements that are possible?

Bob: It’s really hard to say that it’ll never improve further. In the early days it was about improving the DACs and lowering the jitter. It was only when we were able to apply digital signal processing that we were able to get any more out of CD. From a purely psychoacoustic viewpoint, 16 bits isn’t enough to cover the dynamic range we can hear. By using noise shaping we can get a lot closer.

Most recordings don’t have an inherent dynamic range of 20 bits. In fact, if you go to a venue and look at the noise of the venue and the noise of the electronics and the microphone’s inherent thermal noise, only a few recordings are better than 18 bits. So it’s quite hard to get a signal which covers the dynamic range of human hearing. However, we also know from psychoacoustics that you can hear things below the noise floor, especially if they’re structured, and especially if they’re correlated to certain signals we’re listening to. So down in the threshold area 20 bits are okay, but 24 bits are absolutely much more than we need.
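For readers who want numbers behind the bit depths discussed here, the sketch below tabulates the textbook signal-to-quantization-noise ratio of an ideal N-bit quantizer driven by a full-scale sine wave, roughly 6.02N + 1.76 dB. This is the standard idealized figure, not a calculation taken from the interview or from Meridian; it ignores dither, noise shaping, and the audibility of signals below the noise floor that Stuart describes, and the function name is illustrative.

```python
import math

def ideal_sqnr_db(bits: int) -> float:
    """Ideal signal-to-quantization-noise ratio, in dB, for a full-scale sine."""
    # Sine RMS divided by quantization-noise RMS equals 2**bits * sqrt(1.5),
    # which is where the familiar 6.02 * bits + 1.76 dB rule comes from.
    return 20 * math.log10(2 ** bits * math.sqrt(1.5))

# Bit depths mentioned in the interview.
for bits in (14, 16, 18, 20, 24):
    print(f"{bits:2d} bits: {ideal_sqnr_db(bits):6.1f} dB")
```

Each extra bit adds about 6 dB, so on this idealized measure the step from 16 to 20 bits is worth roughly 24 dB.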

The question is whether the CD coding space [44.1kHz, 16-bit] is good enough. The answer is that it would be nice to have a little bit more, because it cannot transparently bring to a human listener everything that he can hear. In my AES paper on high resolution a few years ago, I asked the question “What are the parameters of a transmission channel that is completely transparent between the performers on a stage of a concert hall and a listener?” The answer is that it’s actually more than 20 bits, and it really has to be wider than 44kHz.

Robert: How much of the characteristic “CD sound” that we’ve been listening to for the past 26 years is attributable to the time-domain distortion introduced by digital filters?

Bob: You’ve hit it exactly. There are some pretty horrendous digital filters. The easiest kind to build is the FIR [Finite Impulse Response], which has the nice property that it’s linear phase, but it has a perplexing quality in that it’s non-causal. You can put a signal into it and parts of the signal come out before the signal itself.

Robert: The pre-ringing.

Bob: The pre-ringing. One of the big differences between a 96kHz sound and 44kHz is that both of them are going to have pre-ringing, but the ringing frequency is inaudible in the case of 96kHz but clearly audible with 44kHz. Why? Because the filter is going to ring at something below the half-sample rate, which puts the ringing in the audioband with a 44kHz sampling rate. The sampling frequency determines the time-domain performance. But more important is that part of the signal is coming out before the signal itself. We know from psychoacoustics that if the time domain is smeared and you have energy coming out before the main event, it’s going to be extremely audible.
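The pre-ringing described above can be illustrated with a minimal sketch, assuming a generic Hann-windowed-sinc low-pass rather than any filter used in a real player. The tap count (101) and the cutoff at 0.45 of the sample rate are hypothetical choices made only for illustration. Because the taps are symmetric (linear phase), the impulse response carries as much ringing energy before its main peak as after it, and the ringing sits near the cutoff frequency, which scales with the sample rate.

```python
import math

def linear_phase_lowpass(num_taps: int, cutoff_hz: float, sample_rate_hz: float) -> list:
    """Hann-windowed-sinc low-pass FIR taps (symmetric, hence linear phase)."""
    fc = cutoff_hz / sample_rate_hz      # normalized cutoff, cycles per sample
    mid = (num_taps - 1) / 2             # centre of symmetry
    taps = []
    for n in range(num_taps):
        x = n - mid
        # Ideal low-pass impulse response: a sinc oscillating at the cutoff.
        ideal = 2 * fc if x == 0 else math.sin(2 * math.pi * fc * x) / (math.pi * x)
        window = 0.5 - 0.5 * math.cos(2 * math.pi * n / (num_taps - 1))
        taps.append(ideal * window)
    return taps

for rate in (44_100, 96_000):
    cutoff = 0.45 * rate                 # hypothetical cutoff just below rate / 2
    taps = linear_phase_lowpass(101, cutoff, rate)
    peak = max(range(len(taps)), key=lambda i: abs(taps[i]))
    pre_energy = sum(t * t for t in taps[:peak])       # arrives before the main event
    post_energy = sum(t * t for t in taps[peak + 1:])  # arrives after it
    print(f"{rate} Hz: half-sample rate {rate / 2:.0f} Hz, ringing near {cutoff:.0f} Hz, "
          f"pre/post energy {pre_energy:.4g} / {post_energy:.4g}")
```

Under these assumptions the ringing lands just below 20kHz at a 44.1kHz sample rate and above 43kHz at 96kHz, with equal energy on either side of the peak in both cases.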

We set ourselves a challenge to design a filter for high resolution that didn’t have this problem. We did a study with Peter Craven and developed the “apodising” filter that has some very natural properties. It rolls off smoothly above the audioband, and the ringing is gone.

Robert: And this is largely the reason for the 808.2’s sound?

Bob: Yes. We were very, very pleased with the filter in listening tests with high-resolution sources. But the case of the 808.2 was a much harder problem. We realized that what makes high resolution sound better was the time-domain behavior, how transients are handled, and how we localize things in the soundfield. The apodising filter allows us to remove all the errors that are upstream. The ringing occurs after the event rather than before it. We would take high-resolution and standard-resolution recordings and ask, “Can we tell the difference using this apodising filter?” Generally the answer was “no.” The filter gives us a tremendous coding advantage in making high-resolution recordings, but it also allows listeners to get the most out of the bulk of the catalog, which is on CD.
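To see what "ringing after the event rather than before it" looks like in general terms, here is a minimal sketch using an ordinary causal second-order low-pass built from the widely published Audio EQ Cookbook biquad formulas. It is emphatically not Meridian's apodising filter, whose design is not described in the interview; the cutoff, Q, and function name are assumptions chosen only to show that a causal recursive filter produces no output before the impulse arrives.

```python
import math

def biquad_lowpass_impulse(cutoff_hz, sample_rate_hz, q=0.7071, length=64, impulse_at=10):
    """Response of a causal second-order low-pass to a unit impulse at `impulse_at`."""
    w0 = 2 * math.pi * cutoff_hz / sample_rate_hz
    alpha = math.sin(w0) / (2 * q)
    # Audio EQ Cookbook low-pass coefficients.
    b0 = b2 = (1 - math.cos(w0)) / 2
    b1 = 1 - math.cos(w0)
    a0, a1, a2 = 1 + alpha, -2 * math.cos(w0), 1 - alpha
    x = [1.0 if n == impulse_at else 0.0 for n in range(length)]
    y = [0.0] * length
    for n in range(length):
        # Direct-form difference equation; samples before n = 0 are taken as zero.
        x1 = x[n - 1] if n >= 1 else 0.0
        x2 = x[n - 2] if n >= 2 else 0.0
        y1 = y[n - 1] if n >= 1 else 0.0
        y2 = y[n - 2] if n >= 2 else 0.0
        y[n] = (b0 * x[n] + b1 * x1 + b2 * x2 - a1 * y1 - a2 * y2) / a0
    return y

response = biquad_lowpass_impulse(0.45 * 44_100, 44_100)
print("energy before the impulse:", sum(v * v for v in response[:10]))
print("energy after the impulse: ", sum(v * v for v in response[10:]))
```

All of the filter's ringing falls at or after the impulse, in contrast to the symmetric response of a linear-phase FIR, at the cost of a phase response that is no longer linear.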

Robert: This is a really important thing—to extract more music out of the vast CD catalog.

Bob: Absolutely. If you’re interested in the music and you want the artist or the performance or the interpretation, you have to listen to CD. We felt that it was time to take everything we learned from high resolution and try to make a CD player that makes CDs sound like high-resolution recordings. What would that CD player be like? It would have a psychoacoustically optimized filter that didn’t have pre-ringing and that would remove the ringing from everything that happened before in the A/D converter. It’s a complicated filter and it takes an enormous amount of computational power. We designed about 12 filters that measured the same but sounded different from each other. They had slightly different properties that I can’t talk about because I’m confident nobody has anything like it.

Once you’ve listened to a CD played back like this and then go back to a conventional filter, even a very good one, you hear sparkle and glare in the recording. While working on this we used a recording of choral music made in one of Cambridge’s chapels. I know the sound of the chapel well. In the silences between the singing, the soundfield was sparkling like it was phosphorescing because of the pre-ringing. The sound is almost a caricature, as though someone took a pencil and drew a hard line around every acoustic object.

Okay, you don’t have the very low noise floor of 96/24, but you no longer have this sense that there was a problem at the top end because the sample rate’s too low. It’s open, and easy, like listening to high-resolution. Should we have done it earlier? Well, that’s an interesting question.

Robert: How do you see the future of high resolution?

Bob: It’s incredibly important that you capture the content and archive at the highest possible resolution. Even if it’s going to be delivered on a storage channel like CD, you absolutely should capture it with all the information that we can hear.

But what intrigues me is that with the 808.2 we’ve closed the gap. It takes you back much closer to the mixing desk. We’ve made 28 models of CD players and every one was better than the one before it, but this step feels like we’ve arrived somewhere quite new.


More articles from this editorRead Next From News

See all

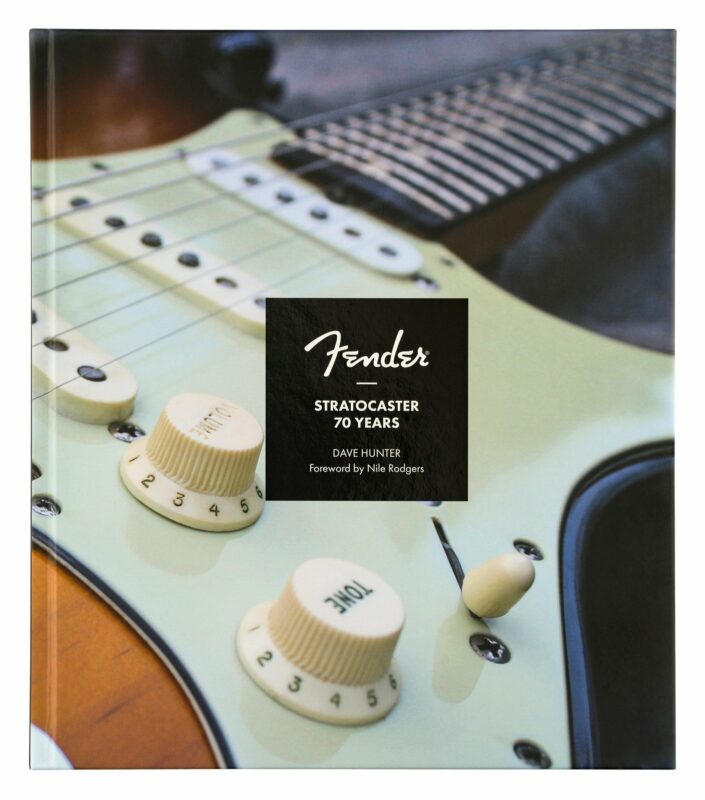

FENDER STRATOCASTER® 70TH ANNIVERSARY BOOK

- Apr 15, 2024

PowerZone by GRYPHON DEBUTS AT AXPONA

- Apr 13, 2024